Can We Trust the Systems?

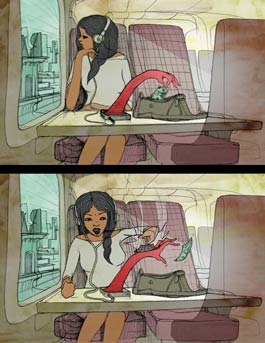

Lehigh researchers across numerous disciplines are working to thwart hackers and data thieves, with the ultimate goal of creating a world of trustworthy computing. Illustration by Will Barras.

You’re sick and can’t get to a doctor, so you post a question about your symptoms on an online health forum. In doing so, you’ve left a digital footprint.

You’re hungry and in an unfamiliar neighborhood, so you use your smartphone to search for nearby restaurants. Another footprint.

You frequent a particular online vendor, so you store your credit card information in your account to expedite checkout. Yet another footprint.

Regardless of how you’ve used your computer or mobile device, when you enter information into a website, search engine or social media platform, you’ve left a digital breadcrumb of sorts that can potentially be seen or tracked by others.

“We have left so many footprints on the Internet, and we have no idea what type of information we have left,” says Ting Wang, a Lehigh assistant professor of computer science and engineering. “[And] if someone wants to dig in, they can find out.”

There’s no shortage of digging going on, either. When former National Security Agency subcontractor Edward Snowden leaked classified information in 2013 about the NSA’s surveillance activity, he triggered a global conversation about privacy in an age where most everything, including our personal data, is digitized.

“Privacy, whether it’s abused by attackers or whether it’s abused by our own government, is a very big concern,” says Daniel Lopresti, professor and chair of computer science and engineering and director of Lehigh’s Data X Initiative. “So privacy and security go together hand in hand, and the bigger rubric is trustworthy computing—can we trust the systems?”

Today, individuals with malicious intent operate from anywhere in the world, attempting to access and manipulate software, networks, physical systems and the data stored within each of them. The protection of personal information has become a critical issue, and Lehigh researchers are working to strengthen systems and bolster user trust in a variety of ways.

Demonstrating the problem

The goal in privacy research, says Wang, is to raise people’s level of concern by making them aware of the risk. In one area of his systems-based work, Wang and his team use data mining and machine learning tools to understand and quantify privacy risk.

Many online systems request basic personal information, such as age, sex and zip code, but allow users to operate under pseudonyms or avatars, providing a sense of privacy. Because they have not provided a full name, people assume they can’t be identified.

“Many think this is effective protection,” says Wang. “[But] there are attacks that can break the protection and reveal some sensitive information about people.”

Even without immediate access to names, an attacker can use what’s known as a “linking attack,” connecting one particular dataset with public datasets such as voter registration lists. Correlating the datasets can reveal user identities. In fact, says Wang, 98 percent of U.S. citizens can be uniquely identified by just three attributes: zip code, age and gender. In the case of medical information, correlating data can reveal such private details as an individual’s disease or other health affliction.
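A minimal sketch of how such a linking attack might work, assuming an anonymized dataset and a public dataset that share the same three attributes. Every record, name and field here is invented for illustration; this is not the researchers’ code.

```python
# Toy linking attack: all records below are invented for illustration.
anonymized_posts = [
    {"pseudonym": "user_a", "zip": "18015", "age": 34, "gender": "F", "condition": "asthma"},
    {"pseudonym": "user_b", "zip": "18015", "age": 52, "gender": "M", "condition": "diabetes"},
    {"pseudonym": "user_c", "zip": "18018", "age": 52, "gender": "M", "condition": "migraine"},
]

# Stand-in for a public dataset such as a voter registration list.
public_records = [
    {"name": "Alice Example", "zip": "18015", "age": 34, "gender": "F"},
    {"name": "Bob Example", "zip": "18015", "age": 52, "gender": "M"},
    {"name": "Carl Example", "zip": "18018", "age": 52, "gender": "M"},
    {"name": "Dave Example", "zip": "18018", "age": 52, "gender": "M"},
]

QUASI_IDENTIFIERS = ("zip", "age", "gender")


def key(record):
    """Combine the shared attributes into a single lookup key."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def link(anonymized, public):
    """Return {pseudonym: (name, condition)} for rows that match exactly one person."""
    index = {}
    for person in public:
        index.setdefault(key(person), []).append(person["name"])
    linked = {}
    for row in anonymized:
        matches = index.get(key(row), [])
        if len(matches) == 1:  # a unique match reveals the identity
            linked[row["pseudonym"]] = (matches[0], row["condition"])
    return linked


reidentified = link(anonymized_posts, public_records)
```

In this toy data, two of the three pseudonyms map to exactly one public record and are exposed along with their health condition. The third matches two people and stays ambiguous, which is why combinations of attributes shared by many people offer more protection.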

In a paper currently under submission, Wang and colleagues Shouling Ji, Qinchen Gu and Raheem Beyah, all of the Georgia Institute of Technology, demonstrate the vulnerabilities within online health forums. In the study, they introduce a new online health data de-anonymization (DA) framework, which they call De-Health. De-Health identifies a candidate set for each anonymized user in a forum and then de-anonymizes each user to a user in its candidate set. Applying De-Health to user-generated datasets from popular forums WebMD and HealthBoards, the researchers linked hundreds of anonymized users to real-world people and their personal information, including full names, health information, birthdates and phone numbers.
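The two-phase idea, first narrowing each anonymized user to a candidate set and then choosing within it, can be sketched roughly as follows. The features (words in a post), the similarity measures, the threshold and all sample text are assumptions made for illustration; the article does not describe De-Health’s actual features or algorithms.

```python
from collections import Counter

# Invented writing samples from users whose identities are already known.
known_users = {
    "alice": "my inhaler helps my asthma every morning",
    "bob": "my insulin dose changed after the clinic visit",
    "carol": "the migraine started after the long flight",
}


def jaccard(text_a, text_b):
    """Cheap vocabulary overlap, used here to pick candidates."""
    a, b = set(text_a.split()), set(text_b.split())
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cosine(text_a, text_b):
    """Finer comparison of word counts, used here to choose among candidates."""
    a, b = Counter(text_a.split()), Counter(text_b.split())
    dot = sum(a[word] * b[word] for word in a)
    norm = (sum(v * v for v in a.values()) * sum(v * v for v in b.values())) ** 0.5
    return dot / norm if norm else 0.0


def candidate_set(post, users, k):
    """Phase one: keep the k known users most similar to the anonymous post."""
    return sorted(users, key=lambda name: jaccard(post, users[name]), reverse=True)[:k]


def de_anonymize(post, users, k=2, threshold=0.5):
    """Phase two: pick the best candidate, or None if no one is similar enough."""
    candidates = candidate_set(post, users, k)
    best = max(candidates, key=lambda name: cosine(post, users[name]))
    return best if cosine(post, users[best]) >= threshold else None


match = de_anonymize("asthma is worse every morning without my inhaler", known_users)
no_match = de_anonymize("looking for a good recipe for dinner", known_users)
```

Splitting the work this way keeps the expensive comparison confined to a handful of candidates, and the threshold lets the attacker decline to guess when no known user is a convincing match.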

Wang and his colleagues hope De-Health will help researchers and policymakers improve the anonymization techniques and privacy policies utilized on websites like these.

Beyond exploring risk, Wang focuses on building technical tools that can help people understand the potential privacy leakage, or privacy price, of the things they do and say online. He also investigates protection mechanisms that will help individuals control who has access to their information. There’s a balance, he says, in the amount of information shared and the service or results received.

“There’s no free lunch with privacy,” says Wang. “Companies need information to do personalized service. Everyone understands that. But no one understands [if] the information is too much, if it reveals too much about themselves. ... Society lacks an understanding of how the system of data use works.”

Hiding information

Even with an awareness of the risks, sensitive information still finds its way onto the Internet. Mooi Choo Chuah, professor of computer science and engineering, studies ways to protect that data and defend it from network-based attacks.

“Every time you have new changes in an operating system, you tend to have some vulnerability, and the minute the attackers know that there is such vulnerability, they will launch an attack,” says Chuah.

Chuah’s research includes mobile computing, mobile healthcare, disruption-tolerant network design, and network security. Another focus involves the design of data mining techniques over encrypted data. Encryption is particularly useful in the healthcare sector. In clinics and hospitals, data mining of patients’ data allows for more effective, personalized treatment, but unencrypted data stored on computers can leak sensitive information when such systems are breached. Thus, it makes more sense for the data to stay encrypted until it is accessed by applications.

In terms of individual patient service, Chuah has designed a protocol in which the data of a patient with diabetes, for example, can be encrypted such that only his or her primary care physician and endocrinologists who specialize in diabetes at affiliate hospitals can open it. This attribute-based encryption, which allows only individuals with defined characteristics to open the data, enables patients to determine who can see their information.
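The access rule in the diabetes example can be sketched as a policy over attributes. This models only the policy logic; in real attribute-based encryption the rule is enforced cryptographically by the keys themselves, and nothing here reflects the details of Chuah’s protocol. The attribute names and patient ID are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """A reader qualifies if they hold every attribute in at least one clause."""
    clauses: tuple

    def allows(self, attributes):
        return any(clause <= set(attributes) for clause in self.clauses)


# Hypothetical policy a patient might attach to a diabetes record.
policy = Policy(clauses=(
    frozenset({"role:primary_care_physician", "patient:1234"}),
    frozenset({"role:endocrinologist", "specialty:diabetes", "hospital:affiliate"}),
))

primary_care = {"role:primary_care_physician", "patient:1234"}
affiliate_specialist = {"role:endocrinologist", "specialty:diabetes", "hospital:affiliate"}
outside_specialist = {"role:endocrinologist", "specialty:diabetes", "hospital:other"}

decisions = {
    "primary_care": policy.allows(primary_care),
    "affiliate_specialist": policy.allows(affiliate_specialist),
    "outside_specialist": policy.allows(outside_specialist),
}
```

The point of the design is that access follows from characteristics rather than from a list of named individuals, so a newly hired endocrinologist at an affiliate hospital would qualify without the patient re-encrypting anything.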

Chuah’s encryption design also enables healthcare providers to learn how to better serve a patient without risking the safety of patient data. Patient information, accessed through an information-sharing coalition with other hospitals, can allow for improved service, says Chuah. When encrypted using Chuah’s design, sensitive data can be shared and explored for patterns, but only those authorized users with the appropriate keys can view the actual data. This allows for data mining without the risk of information leakage.

“[It’s] the safest way [to perform data mining],” says Chuah. “You can outsource your computation to a public cloud like Amazon, but they have no idea what your data is because it’s in encrypted form.”

Disguising information flow

Data is not the only thing that can divulge information about users. The way it flows reveals quite a bit as well.

Suppose, for example, you log in to your bank account. The data associated with that account is encrypted, but the fact that you have an account with that bank is not a secret.

“That is sensitive information,” says Parv Venkitasubramaniam, associate professor of electrical and computer engineering. “Nobody has a right to know which bank you bank with. [Privacy is essential because] any information flow and any communication in cyberspace cannot be without an element of sensitive information. ... What we are looking at is even if the information is encrypted, there’s still something that’s being leaked implicitly.”

Even if someone isn’t able to read a message shared between two individuals, that person can still know two people are communicating, and the timing of how the message is sent tells a story all its own. That, says Venkitasubramaniam, is a violation of privacy as well. His solution is to change how the information flows.

One focus of Venkitasubramaniam’s research is user anonymity in wireless networks. He seeks to understand how to prevent information leakage through transmission timing. Venkitasubramaniam uses the analogy of sending a letter via the postal system to explain the concept: When one person sends a letter to another, he or she prints the recipient’s name and address and the sender’s own name and address on the envelope. The message itself is hidden, but the sender, recipient and postmark are obvious to an observer. Similarly, in a wireless network, transmission timing can reveal information about the sender and receiver as well as the routes of information flow.

To disguise the journey of the letter, the sender might put the envelope inside another envelope and place that one inside yet another envelope, addressing each envelope to a different recipient. Each recipient will in turn mail the letter to the next, unaware of the start or end points of the communication, and eventually the letter will reach its intended recipient. This method provides information flow security, but it comes at a cost: time. The same is true with electronic transmission in a wireless network, so Venkitasubramaniam’s work also involves understanding a fundamental trade-off: the price paid for information flow security in terms of increased delay, network bandwidth consumption and additional use of resources.
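The nested-envelope idea can be sketched with plain data structures. This is a simplification under stated assumptions: the envelopes are ordinary dictionaries with no encryption, the relay names are invented, and the hop count stands in for the delay cost described above.

```python
def wrap(message, route):
    """Build nested envelopes; the outermost is addressed to the first stop on the route."""
    envelope = {"to": route[-1], "content": message}
    for hop in reversed(route[:-1]):
        envelope = {"to": hop, "content": envelope}
    return envelope


def deliver(envelope):
    """Each stop opens one envelope and sees only the address on the next one."""
    hops = []
    while isinstance(envelope["content"], dict):
        hops.append(envelope["to"])
        envelope = envelope["content"]
    hops.append(envelope["to"])
    return hops, envelope["content"]


route = ["relay_1", "relay_2", "recipient"]
outer = wrap("hello", route)
hops, message = deliver(outer)

# Cost of the extra privacy: two additional mailings compared with sending directly.
extra_hops = len(hops) - 1
```

An observer watching the sender sees only a letter addressed to `relay_1`. Each added relay makes the path harder to trace and adds another leg to the journey, which is the trade-off between flow security and delay.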

Venkitasubramaniam’s graduate research focused on communication and signal processing methodologies, a more mathematical approach. Information flow security is a good match, he says, because it involves changing the protocol, and protocol changes affect the performance metrics of a network. His current research is primarily theoretical. He and his team attempt to derive an algorithm, changing control variables to understand how to best achieve the desired network performance while minimizing information retrieval from transmission timing.

When it comes to privacy, Venkitasubramaniam says, it all depends on the expectations of individuals, who tend to operate on a scale.

“On the one end, you have people who share every moment of their lives on social media, and then you have those who are nonexistent on social media,” he says. “You have to develop a system that caters to all of them. In that sense, the way we try to address this challenge is by having some sort of fluid metric for privacy and claim that, depending on where you stand on the scale, this is the kind of performance you can expect from the network.”

Denying access

The problem of security doesn’t arise only when communicating online. Personal data is at risk even in brick-and-mortar stores.

When customers in Target stores swiped their credit and debit cards at the height of the 2013 holiday shopping season, they were unaware their confidential customer data would fall into the hands of thieves. Millions of Target shoppers were affected by this large-scale breach, and the company later revealed that the personal information of millions more had also been stolen. The company lost millions of dollars in addition to the trust of many customers.

Yinzhi Cao, assistant professor of computer science and engineering, thinks a defensive measure against this kind of attack can be the card itself.

Cao and his colleagues, Xiang Pan and Yan Chen, both of Northwestern University, developed SafePay, “a system that transforms disposable credit card information to electrical current and drives a magnetic card chip to simulate the behavior of a physical magnetic card.” SafePay is a low-cost, user-friendly solution that would be compatible with existing card readers while preventing information leakage through fake magnetic card readers or skimming devices.

SafePay utilizes a bank server application, a mobile banking application and a magnetic card chip. The user downloads and executes the mobile banking app, which communicates with the bank server. During transactions, the bank server provides the mobile app with disposable credit card numbers, which expire after a limited time or even one use. In the absence of wireless Internet access, the app can collect and store several disposable card numbers for future use.
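A rough sketch of how disposable card numbers that expire after a limited time or a single use might behave. The class, its method names and the 60-second lifetime are hypothetical; this is not SafePay’s actual protocol, and the random digits are not valid card numbers.

```python
import secrets


class BankServer:
    """Hypothetical issuer of short-lived, single-use card numbers."""

    def __init__(self, lifetime_seconds=60):
        self.lifetime = lifetime_seconds
        self.issued = {}  # card number -> expiry time in seconds

    def issue(self, now):
        number = "".join(secrets.choice("0123456789") for _ in range(16))
        self.issued[number] = now + self.lifetime
        return number

    def authorize(self, number, now):
        # pop() removes the number on first use, so a skimmed copy cannot be replayed.
        expiry = self.issued.pop(number, None)
        return expiry is not None and now <= expiry


bank = BankServer(lifetime_seconds=60)

number = bank.issue(now=0)
first_use = bank.authorize(number, now=10)   # within lifetime, never used
replay = bank.authorize(number, now=20)      # same number presented again
stale = bank.authorize(bank.issue(now=0), now=120)  # presented after expiry

# Without connectivity, an app could hold several numbers fetched in advance.
stored_for_later = [bank.issue(now=0) for _ in range(3)]
```

Time is passed in explicitly so the behavior is easy to follow. A number captured by a skimming device is worthless once it has been used or has expired.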

Once it has a card number, the mobile app generates an audio file in wave format and plays the file to generate electrical current, which connects with the magnetic card chip via an audio jack or Bluetooth. The magnetic card chip functions like a magnetic card stripe on a traditional credit card, making it compatible with existing card readers.

Test results show that SafePay can be used successfully in all tested real-world scenarios. The team, whose work was supported by funding from Qatar National Research Fund and the National Science Foundation, envisions banks distributing the SafePay device to users in an effort to safeguard account information.

Recognizing attacks

In 2013, a man in New Jersey using an illegal GPS jamming device to hide his location from his employer inadvertently interfered with the satellite-based tracking system used by air traffic controllers at Newark Liberty International Airport. The relatively inexpensive jamming device confused the airport’s radar, which sends out a signal that bounces off an airplane to determine its location.

This type of attack, though in this case unintentional, prevents air traffic control from receiving signals from airplanes. However disruptive, it’s easy to recognize—when nothing works, it’s obvious that something is wrong.

Rick Blum, the Robert W. Wieseman Professor of Electrical Engineering and professor of electrical and computer engineering, examines far more sophisticated attacks on sensor systems and networks. Through U.S. Army Research Office funding, Blum works to develop the fundamental theory of cyberattacks on sensors employing digital communications while advancing state-of-the-art design and analysis approaches for estimation algorithms under attack.

Sensors, which can be as complicated as radar and as simple as a thermostat, collect and respond to data. Like anything connected to a wireless network, sensors can be compromised. An attacker can, for example, employ a spoofing attack to slightly modify the data going into a sensor without being detected. In the case of an airplane, someone might tamper with the delay of the radar signal returning to air traffic control. In another type of attack, known as a man-in-the-middle attack, an attacker can modify the data coming out of a sensor by physically taking it over or by intercepting communications.

The first step in protection, says Blum, is to make sure the signals received are what you’d expect.

“The signal coming from this airplane has got to look like the signal I sent, but delayed,” he explains. “If it looks really different, then somebody did something. ... [But] with sophisticated attacks, you really have to be more sophisticated.”
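The check Blum describes, that the returning signal should look like a delayed copy of what was sent, can be sketched with a normalized correlation. The signal values, the delay range and the 0.8 threshold are assumptions for illustration, not parameters from his research.

```python
def best_delay(sent, received, max_delay):
    """Return (delay, score) for the shift where received best matches sent."""
    def score(delay):
        segment = received[delay:delay + len(sent)]
        if len(segment) < len(sent):
            return 0.0
        dot = sum(s * r for s, r in zip(sent, segment))
        norm = (sum(s * s for s in sent) * sum(r * r for r in segment)) ** 0.5
        return dot / norm if norm else 0.0

    return max(((d, score(d)) for d in range(max_delay + 1)), key=lambda pair: pair[1])


def looks_genuine(sent, received, max_delay, threshold=0.8):
    """Accept the echo only if some delay makes it closely resemble the sent pulse."""
    _, score = best_delay(sent, received, max_delay)
    return score >= threshold


sent = [1, -1, 1, 1, -1]
clean_echo = [0, 0, 0] + sent + [0, 0]          # same pulse, three samples late
altered_echo = [0, 0, 0, 1, 1, 1, 1, 1, 0, 0]   # something else came back

delay, score = best_delay(sent, clean_echo, max_delay=5)
clean_ok = looks_genuine(sent, clean_echo, max_delay=5)
altered_ok = looks_genuine(sent, altered_echo, max_delay=5)
```

A test like this catches crude tampering. It would not catch an attacker who replays the correct pulse with a slightly wrong delay, which is the kind of sophisticated attack that requires more than a shape check.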

Devices called “bad data detectors” serve as a level of defense, determining whether or not data looks the way it should. Still, some bad data can pass through the detectors. Consistency here is key.

“The data that one sensor is getting should be consistent with the data the other sensor is getting,” says Blum. “If it’s not, you can see it by looking at it. It’s really good to have lots of sensors because they can’t attack them all. You have to look at things that are going on in different places and think, ‘Are they consistent?’ We have mathematical models for what the sensor data should look like, and we can sort of say, ‘Okay, that’s really way too different.’”
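A simple version of this consistency check compares each sensor with the group’s median. The readings, the sensor names and the tolerance are invented for illustration; Blum’s team works with mathematical models of the sensor data rather than this basic rule.

```python
from statistics import mean, median


def flag_inconsistent(readings, tolerance=3.0):
    """Flag sensors whose distance from the median is far larger than is typical."""
    center = median(readings.values())
    deviations = {name: abs(value - center) for name, value in readings.items()}
    typical = median(deviations.values()) or 1e-9  # avoid dividing by zero
    return sorted(name for name, dev in deviations.items() if dev / typical > tolerance)


# Five hypothetical temperature sensors; one has been tampered with.
readings = {"s1": 20.1, "s2": 19.9, "s3": 20.0, "s4": 20.2, "s5": 27.5}

suspects = flag_inconsistent(readings)
trusted = [value for name, value in readings.items() if name not in suspects]
estimate = mean(trusted)
```

The median is used because a single compromised sensor cannot drag it far, unlike an average. This works only while most sensors remain honest, which is the reason it helps to have many of them.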

With enough data and time samples at each sensor, Blum and his team can determine which sensors have been attacked. They also conducted mathematical analyses to determine what to do with data that has been compromised—whether it’s safe to use to make an estimation or if it should be discarded. The risk of sensor attacks increases with the rise of the “Internet of Things,” the network of physical objects that collect and exchange data via computational elements.

“The Internet of Things says that we’re going to have sensors all over the place—in our homes, in our cars. ... We have to figure out how to protect it,” Blum says.

Defending cyber-physical systems

As connections between cyber systems and physical systems continue to develop, much more than information can be threatened. When linked by communication networks, critical infrastructure is at risk as well.

Take the U.S. electrical power grid, for example. Advanced electricity systems, or “smart grids,” help make our use of energy more technically and economically efficient as well as more environmentally friendly. In the past, says Chuah, a large-scale outage would occur because of a local substation disturbance known only by the local control center. Because power lines are interconnected and the stations didn’t communicate, other stations didn’t receive warning and couldn’t prepare ahead of time. Smart grids help prevent that.

“You can collect data regarding the current and the voltage going through all these transmission lines, and that data can be sent to remote power centers to provide a more global view to look at the health of the power systems,” says Chuah. “But, unfortunately, anytime you put smart meters on a network—especially over a wireless network—it’s bound to be compromised.”

An attack on the smart grid targets more than data—it targets the system itself. A malicious user with access to the system might embed a virus within it or access sensitive customer information, but attackers might also inject false data into the power grid system, prompting incorrect and potentially devastating control decisions.

Blum directs Lehigh’s Integrated Networks for Electricity (INE) cluster, a team of engineers, mathematicians and economists dedicated to research and education on advanced electricity networks. Nine cluster members compose a Lehigh team that has partnered with four other universities—the University of Arkansas, the University of Arkansas at Little Rock, Carnegie Mellon University and Florida International University—and has received a $12.2 million grant from the U.S. Department of Energy to develop and test new technologies to modernize and protect the U.S. electrical power grid. Participating Lehigh cluster members are Blum, who serves as the team’s principal investigator; Chuah; Venkitasubramaniam; Liang Cheng, associate professor of computer science and engineering; Boris Defourny, assistant professor of industrial and systems engineering; Shalinee Kishore, associate professor of electrical and computer engineering; Alberto Lamadrid, assistant professor of economics in the College of Business and Economics; Wenxin Liu, assistant professor of electrical and computer engineering; and Larry Snyder, associate professor of industrial and systems engineering.

The Lehigh team will develop and test algorithms to protect core power grid controls and operations, incorporate security and privacy protection into grid components and services, protect communications infrastructure, and execute security testing and validation to evaluate the efficacy of the measures they implement.

Making no assumptions

Lopresti, whose research has included electronic voter systems and biometric security, emphasizes vigilance in research and practice. The problem of hackers and other data thieves, he says, isn’t going away, so researchers at Lehigh and elsewhere will need to continue their work to throw up new cyber roadblocks.

“Security researchers don’t typically build something and sit back and claim that it’s secure,” he says. “That’s pretty rare. Sometimes you can claim security if you create mathematical models and then within that mathematical framework prove it’s secure, but then those models have to be implemented, and invariably that’s where the problems arise. It’s not that the math is wrong—the math is right—but you put it out in the real world and there are 50 other ways it can be broken.”

Biometrics, he notes, were once considered the final solution for user authentication. But, as he’s proved in some of his research, even if a security measure appears to work, it might have some serious flaws.

And so the work continues.

“If the systems in use aren’t trustworthy, people won’t use them,” he says. “If you’ve ever been in an elevator and it shakes, it’s like, ‘I’m using the stairs from now on.’ That’s exactly what we’re talking about. It’s very, very much like that.”
